Weird Critical Thinking [Trenchant Edges]

Avoiding Delusion on the Fringe

Welcome back to the Trenchant Edges, the dailyish newsletter where we tilt at windmills and hope they don’t tilt back.

Estimated reading time: 10 minutes, 9 seconds. Contains 2033 words

I’m Stephen Fisher, your host and researcher. Have curiosity (and a good back brace), will travel.

Today I want to go back a bit to the original reason we brought up UFOs in the first place: as an edge case to practice critical thinking on.

I made this point in my original piece but it bears repeating: UFOlogy is a janky mix of quasiscientific research, folktales/urban legends, and supernaturalist religiosity.

People from across the political spectrum, from hardline Argentine Trotskyists like J. Posadas to far-right cryptofascist postbirchers like Behold a Pale Horse author William Cooper, have been drawn into UFOlogy’s pull.

This means that you’ll pretty naturally run into a very wide range of ideation on the subject, which makes for good grist for the mill.

The Process Behind Brainstorming

If you’ve read too many books on creativity, as I have, you’re familiar with the notion of brainstorming: getting an individual or group to think of as many semi-relevant ideas about a subject as possible. No judgment or criticism, just seeing a situation through as many lenses as possible. This pool of ideas, bigger than you’d get from more discerning thinking, can then be expanded on by other participants or winnowed down with more rigorous thinking.

The underlying thing brainstorming does is provoke ideation, the process of generating ideas and bringing them to the surface of consciousness.

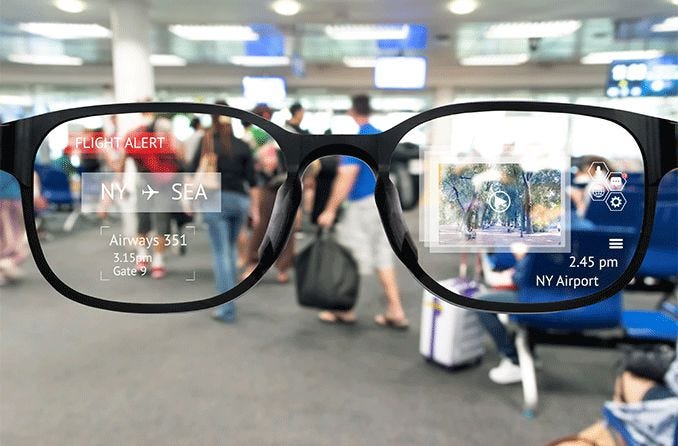

Ideation is a basic function of cognition, a kind of built-in augmented reality.

Ideation comes with a few caveats though: The quality of each idea generated can vary wildly, and rigor and accuracy are never guaranteed. Basically, most ideas are wrong or useless.

Sufficiently wild ideation, unanchored from consensual reality, is the most obvious symptom of psychosis, which should give you a sense of how serious this process is: It can literally drive one insane.

In fact, much mental illness comes paired with “Intrusive thoughts” which are just ideation turned against the ideator. I myself have a long history of thoughts of harming myself or others.

So this isn’t a trivial skill here, it’s one of the signals people use to draw a line between mental health and mental illness.

I say it’s a skill because people are wildly divergent in what and how they ideate and most people don’t really engage with it as itself unless they start a contemplative practice such as meditation or serious prayer.

Ideation is so important to our story of weirdness because one of the first traps most people fall into is believing what they think about paranoid or conspiracy topics. I can free associate (another name for wild ideation) and storymake about those associations as well as Alex Jones.

The difference, aside from my lack of vitamins to sell, is I’m not putting on a vaudeville show about right-wing paranoia. I’m kinda trying to understand the world as it is and as it could be.

So when I ideate I usually follow it up by beating the new idea with one of my critical thinking tools until it dissolves or proves itself more sturdy.

Let’s talk about those tools.

A Crash Course into Critical Thinking

No, that’s not a typo.

I tend to think “serious” thinking is mainly defined by the dual acts of trying to annihilate an idea or set of ideas and building them back better.

It’s a kinetic process or at least that’s how it feels to me.

The purpose of critical thinking is to avoid delusion, which is a combination of false beliefs and emotional investment. The harder you believe something that ain’t so, the more dangerous it is to live with that delusion.

Everyone’s delusional some of the time and most delusions are minimally harmful.

Actually, I think everyone’s delusional all the time but that’s a different topic altogether.

The most powerful delusions aren’t necessarily the things you believe in the most, but the things tied to the most of your other beliefs.

White supremacy in the US is a good example of this. Few white Americans proactively believe that white people are the master race, Nazi-style. But that assumption is so thoroughly built into so many places in our society that changes that might threaten that material relationship feel like threats to white Americans.

This is true even for white people who proactively believe the opposite. I’ve felt that sense of threat thousands of times myself. The only real defense against it is awareness and understanding.

“Oh, this is my identity doing that thing to protect itself that leads to the opposite of what I want.”

So, what can we use to avoid buying into delusions?

That article on totse.com has some good places to start. Let’s run through them real quick.

You can think of these as different kinds of hammers to hit a claim with to see how it responds. If the whole thing collapses, you can tell it wasn’t made of much more than words. It also might bend or break or completely pass the test.

A quick disclaimer: When in conversation with someone who has a profoundly different model of the world than you, it’s very common to think you’re delivering an unstoppable knockout punch/mic drop only to have them shrug it off and ignore it.

Sometimes this is because of bad faith, but more often it’s because you’re actually working on vastly different kinds of information density.

Ex. An atheist might deliver a perfect disproof of the reason a theist has given for their faith.

But their faith isn’t really built on the semantics of that one sentence chain. The chain of logic involved is more of a map of a vastly complex blend of thoughts and feelings and years of experience.

So where the atheist feels like they’ve given a death blow, the theist feels like the atheist is kind of just missing the point. This is the asymmetry of context, and anyone communicating must work very hard to overcome it. This is actually the core of why I don’t care about public debates, but that’s another topic.

James Lett’s FiLCHeRS

Back to the article. So Lett has stripped critical thinking down to six filters to put any new information through to see if it’s roughly scientifically valid.

“Apply these six rules to the evidence offered for any claim, I tell my students, and no one will ever be able to sneak up on you and steal your belief. You'll be filch-proof.”

College professors are delightfully tacky.

His filters are:

Falsifiability

Logic

Comprehensiveness

Honesty

Replicability

Sufficiency

#1

Falsifiability is the old Karl Popper cliche. What evidence would disprove this idea? If you can’t find an answer, maybe you should rethink the question.

Now, Lett takes a hardline stance towards falsifiability, claiming that a statement that isn’t falsifiable is meaningless. I don’t think his logic is sufficient to make that claim, but I definitely think falsifiability is a very useful filter.

For one thing, it demonstrates both courage and determination in the claimant. Avoiding falsifiable statements is a sign someone doesn’t want their ideas challenged.

And if they don’t want their ideas challenged and they’re selling something…. well, that tells you who they are. Handy information to have.

#2

Logic is about an argument being both valid and sound.

A valid argument is one in which, if the premises are true, the conclusions are true. This is where those big lists of logical fallacies come in. Don’t forget the fallacy fallacy!

A sound argument is a valid argument whose premises are also true. So, a sound argument is one whose conclusion is true according to the available information.
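If it helps to see validity as something mechanical, here’s a minimal sketch in Python, assuming simple true/false propositions. The `is_valid` helper and the two example arguments are my own illustrations, not anything from Lett’s article. It brute-forces every combination of truth values and looks for a case where all the premises hold but the conclusion doesn’t.

```python
from itertools import product

def is_valid(premises, conclusion, n_vars):
    """Return True if no truth assignment makes every premise true
    while the conclusion is false. (Hypothetical helper for illustration.)"""
    for values in product([True, False], repeat=n_vars):
        if all(p(*values) for p in premises) and not conclusion(*values):
            return False  # found a counterexample, so the form is invalid
    return True

# Modus ponens: "if P then Q", "P", therefore "Q".
modus_ponens = is_valid(
    premises=[lambda p, q: (not p) or q, lambda p, q: p],
    conclusion=lambda p, q: q,
    n_vars=2,
)

# Affirming the consequent: "if P then Q", "Q", therefore "P".
affirming_consequent = is_valid(
    premises=[lambda p, q: (not p) or q, lambda p, q: q],
    conclusion=lambda p, q: p,
    n_vars=2,
)

print(modus_ponens)          # True
print(affirming_consequent)  # False
```

Notice that nothing in the check looks at whether the premises are actually true in the world. That part can’t be brute-forced; you have to go look.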

There’s an issue Lett doesn’t bring up here about assumptions: One of the most effective ways to lie is to assume something you don’t explicitly state or argue for. So you have to keep an eye out for hidden information in the structure of an argument.

Logic is also one half of my favorite property: Consistency, which is the absence of contradiction. Logic reveals the internal consistency of an idea and empirical experimentation reveals the external consistency of an idea.

#3

Comprehensiveness is tricky with UFOs because holy shit are there so many sightings and stories. It’s the property of addressing all the available and relevant information.

Spotting comprehensiveness is hard because it involves having a wider range of examples than whoever you’re evaluating, which may be difficult.

Luckily, with the internet, we can always ask, “Is there data we’ve failed to account for?” and almost always find out the answer is yes.

Seeing where someone draws the lines on completeness is VERY telling about their intentions. Charles Murray, for example, considered a handful of studies in a half dozen or so African nations as comprehensive and representative of African and black IQ scores without racial bias. That several of these studies were from apartheid South Africa, one of the most racist places on earth, and several more weren’t even IQ tests, are clear examples of his biases.

Likewise, I once got an A in my first statistics course by showing a chart proving that in 2050 the leader of Russia would be 8 ft tall. I did it by cropping my data so it only showed the progressive height gain between Czar Nicholas II and Boris Yeltsin. My data had to be skewed to avoid Vlad Putin’s paltry 5’6” height.
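Here’s a rough sketch of how that kind of cropping works, in Python. The numbers are made up purely for illustration (they are not real leaders’ heights), and `fit_line` and `predict` are hypothetical helpers doing a plain least-squares line fit. The only point is that dropping one inconvenient data point changes where the trend line lands.

```python
def fit_line(points):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def predict(points, x):
    """Extrapolate the fitted line out to x."""
    slope, intercept = fit_line(points)
    return slope * x + intercept

# Made-up (year, height in cm) points, NOT real measurements.
full_data = [(1900, 165), (1950, 172), (1990, 180), (2010, 168)]
cropped_data = full_data[:-1]  # quietly drop the point that ruins the trend

cropped_2050 = predict(cropped_data, 2050)
full_2050 = predict(full_data, 2050)

print(f"cropped data predicts {cropped_2050:.0f} cm in 2050")
print(f"full data predicts {full_2050:.0f} cm in 2050")
```

Same method, same math, different answer. The only thing that changed is where the line got drawn around the data.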

#4

Honesty just means to recognize when your evidence is bad and you can’t make a positive claim rather than rationalizing it.

This is trickier than it sounds in practice because every theory goes through moments of apparent disconfirmation which go on to provide important refinements in its content.

In practice, it’s a lot easier to look at how someone handles criticism of various kinds. If there are patterns of constant deflection and abuse, you’re probably dealing with someone who isn’t honestly reevaluating their ideas with new information.

Evaluations of someone’s honesty and sincerity will always run towards the subjective, so you need to be open to admitting you got it wrong either way.

Disparaging someone’s honesty/sincerity is also one of the best ways to poison the well against them, so keep an eye out for people who do it constantly. If someone tells you everyone but them is lying to you, well, they’ve left out at least one liar.

#5

Replicability is another core science ideal. Occasionally, by chance, you do get 500 coin flips landing heads in a row.

So the more times you repeat a test, the less likely it is that a fluke like that keeps showing up.
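To put rough numbers on the coin flips, here’s a minimal Python sketch. It assumes a fair coin and fully independent trials, which real experiments don’t always give you, and both function names are just mine for illustration.

```python
def chance_of_streak(n_flips, p_heads=0.5):
    """Probability that n_flips independent flips all land heads."""
    return p_heads ** n_flips

def chance_all_replications_fluke(p_fluke, n_replications):
    """Probability that the same fluke shows up in every one of
    n_replications independent attempts (assumes independence)."""
    return p_fluke ** n_replications

for n in (5, 10, 20):
    print(n, chance_of_streak(n))
# 5 0.03125
# 10 0.0009765625
# 20 9.5367431640625e-07
```

The odds of a streak fall off fast as the run gets longer, and the second function applies the same arithmetic to replications: if one result has a small chance of being a fluke, the chance that several independent repeats all fluke the same way is much smaller.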

This one is mostly about keeping an eye out for anecdotes or single experiments being given way too much weight.

The more consistent and repeatable the evidence is, the less likely it is that the result came from chance, rigging, or some other one-off problem. What replication can’t rule out is a serious flaw in methodology, since that repeats right along with the experiment.

Stable results are better results, heh, probably.

#6

Sufficiency is the principle that a deviation from consensus must come with proportionately more evidence to prove it.

Obviously, I think this one is a bit loaded but I think it’s still a worthy filter. Not so much to rule out alternative points of view, but to kind of gauge if they’re robust enough to take over.

Of course, this also depends on what consensus we’re discussing. The Washington Consensus on economic policy might be seen as vastly flimsier than Newton’s physical laws.

And more general opinions about politics could be seen as even flimsier.

The point of sufficiency is it’s on the person deviating from the norm to really bring evidence their deviation is worthwhile. This obviously has some issues if applied really strictly, but I think it’s still worth considering.

So there you have it

Obviously, these six filters are just kind of an introduction to critical thinking and we haven’t really done much to apply them to weird shit yet. But it’s 10am and we’re about to hit 2000 words so it’s probably time to take a break so we can come back and develop this stuff further.

We haven’t even touched on the two hardest questions of all: Who do you trust? and What do you give serious scrutiny to?

For all the criticisms people have of the “mainstream”, almost no one on the fringe has a better answer than, “Well, we have these institutions where smart people gatekeep expertise and so the people who get past those filters are trustworthy.”

Usually, it’s just, “whoever agrees with us.”

And that’s crap no matter what side you’re on.

Anyway, see you soon.